Cite report

IEA (2022), Implementing a Long-Term Energy Policy Planning Process for Azerbaijan: A Roadmap, IEA, Paris https://www.iea.org/reports/implementing-a-long-term-energy-policy-planning-process-for-azerbaijan-a-roadmap, Licence: CC BY 4.0


Concrete steps for Azerbaijan

Setting a timeline

Estimating the timeline for Azerbaijan to develop a long-term energy strategy is complex, as some uncertainty remains about the availability of demand-side data. This is a multi-year process for all countries, and its length depends largely on the government's starting point. If certain key elements, such as survey data, are already in place, the process can take significantly less time. However, planning is key, and governments can begin to develop strategic goals at the same time as they start the statistical survey process.

Designing a survey: Steps and timing

As noted above, the availability of quality energy statistics is fundamental to policy planning and building energy models. The use of existing data should be maximised, including administrative data, which may be overlooked. Often, however, additional data – specifically on end uses of energy – will need to be collected using statistical surveys. In the case of Azerbaijan, the results of the 2018 household energy consumption survey should be very useful to policymakers who want to set targets for the residential sector. However, repeating the survey at regular intervals (ideally every five years) is necessary to provide a reliable baseline. It is therefore important to build the time needed to run these surveys into the overall long-term policy planning timeline. An outline of the steps is provided below. The timing is based on household surveys, but could also apply to business surveys.

It is difficult to predict exactly how much time a survey will need, but it is likely to take roughly six months, or nine months if a pilot survey is added (a recommended step to validate whether the survey design has been successful).

Designing a survey

| Step | Design phase |
|---|---|
| Design questions | Questionnaire and methodology; census or survey (which sampling method?); piloting; finalising the questionnaire |
| Data collection | Data quality – how to deal with; validation/comparability of the data with other surveys; data protection: confidentiality and sensitivity of the data provided; validating data, possible elements to be used are: |
| Processing results | Approach to grossing up survey results |
| Dissemination | Plans for dissemination |
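As a rough illustration of the "approach to grossing up survey results" step, the sketch below assumes a stratified simple random sample of households (strata such as urban and rural) and weights each respondent by the number of households it represents in its stratum, which is the expansion (Horvitz–Thompson) estimator under that design. The function names, strata, household counts and consumption values are hypothetical placeholders, and the sketch ignores non-response adjustment and calibration, which a real survey would normally need.

```python
from collections import Counter


def design_weights(sample_strata, households_per_stratum):
    """Weight = households in the stratum / responding households in that stratum.

    Assumes a stratified simple random sample with no non-response adjustment.
    """
    respondents = Counter(sample_strata)
    return [households_per_stratum[s] / respondents[s] for s in sample_strata]


def gross_up(values, weights):
    """Expansion (Horvitz-Thompson) estimate of a population total."""
    return sum(v * w for v, w in zip(values, weights))


# Hypothetical sample: four responding households in two strata.
strata = ["urban", "urban", "rural", "rural"]
annual_kwh = [2400.0, 3000.0, 1800.0, 2200.0]
households = {"urban": 1_000_000, "rural": 600_000}

weights = design_weights(strata, households)
total_kwh = gross_up(annual_kwh, weights)
print(f"Estimated residential electricity use: {total_kwh / 1e6:,.0f} GWh")
```

Each urban respondent here stands for 500 000 households and each rural respondent for 300 000, so small samples in a stratum carry large weights; this is one reason the sampling design and the grossing-up approach are decided together at the design stage.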

A single survey can be useful, but limited in its impact. Instead, policy makers and statisticians should plan for and put resources towards a series of surveys, ideally every two to three years. This way changes in energy use can be properly monitored. It will always be better to have two small surveys in separate years than one large one-off survey.

Timing to build a 2050 calculator

As discussed, using a 2050-type calculator is just one approach to looking at and building scenarios, but considering its use from a time perspective helps to better understand and assess the whole timeline for developing a long-term plan.

Building a calculator ready for launch generally takes around a year, but this depends on the availability of data. Work can begin while data are still being collected, as the data can be added later. Typically, six months are needed for the design and for discussion of sectors and levels, as well as for building the Excel sheets; the rest of the time is spent on planning and communication. Best practice is to launch the calculator in beta mode as soon as possible to allow testing and feedback. A first version of the calculator typically does not include costs, as cost data are often restricted or very hard to access, but costs are often built into later versions. Countries should aim for a minimum viable model that can be adapted in later versions to suit specific needs and answer more complex questions.
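At its core, a 2050-type calculator is a set of sector "levers" whose chosen levels map to pathways. The minimal sketch below assumes four ambition levels per sector and a single 2050 final-demand value per level; the sector list, the `LEVERS` table and the `demand_2050` function are hypothetical, and every number is a placeholder rather than an estimate for Azerbaijan. Like a typical first version, it leaves out costs.

```python
# Hypothetical lever table: sector -> 2050 final energy demand (TWh) at
# ambition levels 1 (least effort) to 4 (most effort).
# Placeholder values for illustration only.
LEVERS = {
    "residential": [40.0, 35.0, 30.0, 25.0],
    "transport": [50.0, 44.0, 38.0, 30.0],
    "industry": [60.0, 55.0, 50.0, 45.0],
}


def demand_2050(choices):
    """Total 2050 demand for the chosen level (1-4) of every sector lever."""
    total = 0.0
    for sector, levels in LEVERS.items():
        level = choices[sector]
        if not 1 <= level <= len(levels):
            raise ValueError(f"{sector}: level must be 1-{len(levels)}, got {level}")
        total += levels[level - 1]
    return total


scenario = {"residential": 3, "transport": 2, "industry": 4}
print(demand_2050(scenario), "TWh")
```

In a fuller calculator each level would map to a whole trajectory and to supply-side consequences; the lookup table stands in for the Excel sheets, and new sectors or a cost column could be added in later versions without changing the lever logic.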

Monitoring and evaluation

As discussed, a key role for statisticians in the policy-making process is to monitor the outcome of the policy effectively. Monitoring and evaluation should both be seen as key features of the policy-making process. They are linked, but remain fundamentally separate processes.

Monitoring provides headline data on policy performance, answering the question, “what happens as a result of the policy?” Evaluation, meanwhile, provides an understanding of what is happening or has happened, why it happened, and what can be done about it. The evaluation stage therefore covers impact, economic and process elements.

Monitoring and evaluation are not stages that happen only at the end of the policy, but need to happen continuously throughout the policy-making and implementation process.

Before the launch

Key questions:

- How will the policy work?

- Will it be worth it?

- What baseline data are needed to monitor impact?

Planning is key:

- Convene policy makers and analysts (statisticians, economists, social researchers) to work together from the beginning.

- Review the evidence, understand whether there is a policy gap or insufficient data.

- Map the policy, understand how it is intended to work and set out the benefits.

- Design and prioritise evaluation projects within the budget envelope.

- Budget adequate resources for the design and implementation of the policy.

Determine what the policy will ideally deliver:

- Assess what has worked in similar contexts and examine global best practices.

- Assess cost-effectiveness:

  - Will the costs of the policy be less than the savings realised over its life? (How long will the savings last?)

  - Gather evidence for savings and benefits from other policies, expert opinions and other countries.

  - Is the required saving achievable?

- Lay out how the policy will be implemented.

- Divide responsibilities; how likely is it that target stakeholders will act on the policy?

- Assess whether the policy administrators can implement it.
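The first cost-effectiveness question above can be framed as a comparison of the policy's cost with the savings it generates over its lifetime. The sketch below assumes an upfront cost, a constant annual saving and, optionally, a discount rate; discounting is an assumption added here (a common appraisal convention, not something this roadmap prescribes), and the function names and all figures are hypothetical.

```python
def present_value(annual_saving, years, discount_rate=0.0):
    """Present value of a constant saving received at the end of each year."""
    return sum(annual_saving / (1 + discount_rate) ** t for t in range(1, years + 1))


def is_cost_effective(cost, annual_saving, years, discount_rate=0.0):
    """True if lifetime (discounted) savings exceed the upfront cost."""
    return present_value(annual_saving, years, discount_rate) > cost


# Hypothetical policy: cost 100, saving 15 per year (same currency units).
print(is_cost_effective(100, 15, years=10))                      # 10-year savings, undiscounted
print(is_cost_effective(100, 15, years=10, discount_rate=0.10))  # same, discounted at 10%
print(is_cost_effective(100, 15, years=5))                       # savings last only 5 years
```

With these numbers the policy passes only when savings last ten years and are not discounted; a 10% discount rate or a five-year lifetime tips the result the other way, which is why "how long will savings last?" matters.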

Map the benefits of the policy:

- Ensure clarity regarding outputs and outcomes.

- Identify areas of risk and uncertainties.

- Map the benefits to determine:

  - What to measure (outcomes, outputs).

  - What assumptions need to be tested.

  - Where priorities and difficulties/challenges lie (e.g. risk, uncertainty).

Identify the initial necessary work on data and statistics in relation to the policy:

- What data are needed for monitoring and how they can be collected.

- How to produce a baseline (that change is measured against).

- How to pilot the policy and/or undertake pre‑launch research.

During delivery

Key questions:

- Is it working? For whom?

- Why/how?

- Have there been unforeseen events?

After a successful pre‑planning stage, it will be time to launch the policy, although in reality the time for pre‑planning may be limited by the political desire to launch by a certain date. During this phase of the policy cycle, the focus of monitoring and evaluation must shift to what is actually happening.

- Produce reliable evidence – what is working, in what context, for whom, and how? What is not?

- Understand if the anticipated benefits and outcomes are happening.

- Produce evidence-based recommendations to increase the chance of policy success.

To be effective, this stage has to use very timely data. Most likely these would include administrative records from the delivery agents, which are often the part of government operating the policy. Survey data will not be timely enough to show what is happening at present.

If the policy intervention is a government choice to promote something that is not yet happening, it is likely to involve some form of financial support, so these records should exist (for accounting or audit purposes). Ideally, statisticians and policy makers will have used the planning stages to discuss access to these records for statistical reports, as well as to design what information will be recorded. It is very difficult to change the recording system once the policy is up and running.

Evidence gathered while the policy is running is vital to ensure that the overall policy succeeds. Good information allows policies to be adjusted, for example by raising or lowering incentives depending on whether take-up is lower or higher than planned.
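As a hypothetical illustration of using administrative records during delivery, the sketch below compares cumulative take-up to date with the planned figure and returns a suggested direction for reviewing the incentive. The `incentive_signal` function, its ±10% tolerance band and the monthly figures are assumptions for illustration only; a real review would also ask who is taking up the policy and why.

```python
def incentive_signal(actual, planned, tolerance=0.10):
    """Suggest 'raise', 'lower' or 'hold' by comparing take-up with the plan.

    `tolerance` is a hypothetical band (here +/-10%) within which
    deviations from the plan are treated as normal variation.
    """
    if planned <= 0:
        raise ValueError("planned take-up must be positive")
    ratio = actual / planned
    if ratio < 1 - tolerance:
        return "raise"   # take-up below plan: incentive may be too weak
    if ratio > 1 + tolerance:
        return "lower"   # take-up above plan: incentive may be too generous
    return "hold"


# Hypothetical monthly grant approvals from the delivery agent's records.
monthly_grants = [120, 95, 110, 80]
print(incentive_signal(sum(monthly_grants), planned=500))
```

Here 405 approvals against a plan of 500 is 19% below plan, outside the assumed band, so the sketch suggests reviewing whether the incentive should be raised.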

After delivery

Key questions:

- Did it work?

- How and why did it work?

- Was it worth it?

- Who gained?

- Were objectives met?

The after-delivery stage need not come only once the policy has concluded, although it may. It can also refer to a planned review of the policy, and such reviews can be very useful as part of the overall policy cycle. The key questions considered at a review or final stage are what has happened and why. For example:

- What has been achieved and at what cost?

- How efficient was implementation and delivery?

- How do costs and benefits compare with other policies targeting the same outcomes?

- Who paid the costs and who benefited? (and other distributional impacts)

Properly understanding the impact of a policy requires a counterfactual, i.e. “what would have happened if the policy had not been implemented?” In reality this is very difficult: the best way to measure it is to compare against a control group that did not benefit from the policy, but such a group is very hard to establish. The United Kingdom’s work on its National Energy Efficiency Data-Framework provides an example.

In practice, effective evaluations are normally carried out against a modelled counterfactual, i.e. the situation considered likely without the policy, which was most likely produced as part of the planning for the policy. In these instances, it is worth revisiting the initial model, as there may have been changes in the wider national or global economy that were not assumed (for example, a large rise or fall in the price of oil), so re-running the model with these changes taken into account will be useful.
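The two approaches to the counterfactual described above can be sketched as simple calculations. With a control group, a common technique is difference-in-differences (named here as a standard method, not one this roadmap specifies), which subtracts the control group's change from the treated group's change. With a modelled counterfactual, impact is the observed outcome minus the modelled one. The function names and all numbers below are hypothetical.

```python
def did_impact(treated_before, treated_after, control_before, control_after):
    """Difference-in-differences: treated change minus control change."""
    return (treated_after - treated_before) - (control_after - control_before)


def modelled_impact(observed, counterfactual):
    """Impact relative to a modelled 'no policy' outcome (negative = reduction)."""
    return observed - counterfactual


# Hypothetical average household gas use (kWh/year) before and after an
# insulation programme, for participants and a comparable control group.
print(did_impact(15000, 12500, 14800, 14300))  # saving attributable to the policy

# Hypothetical national demand (PJ): observed vs. the original planning-stage
# counterfactual, and vs. a re-run counterfactual reflecting an oil price change.
print(modelled_impact(95, 100))
print(modelled_impact(95, 98))
```

The control group's own 500 kWh fall is netted out, leaving 2 000 kWh attributed to the policy. The last two lines show why re-running the counterfactual matters: the estimated impact shrinks from 5 PJ to 3 PJ once the changed economic context is reflected.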

Monitoring and evaluation may seem like a large burden, given the desire to move ahead and get a policy up and running. However, they are essential to ensure that the planning process for the policy is properly thought through, that changes to the policy can be made if needed and that good evidence can be produced to show what impact the policy had.

Key performance indicators

A sensible part of developing a long-term energy strategy is agreeing on a set of key performance indicators (KPIs) that can be used to track the overall development of the energy situation. These will likely differ from the specific goals of individual policies that are covered by monitoring, as KPIs need to look at all elements of energy supply and demand, though often at a headline level.

It can be challenging to choose which KPIs to use, but as a guide, key indicators should:

- Reflect overall policy needs and strategy (although KPIs can differ between the international and national levels, and between the national and regional levels).

- Be based on consistent data of known quality.

- Have a baseline against which to measure change.

- Form a time series to be most effective, so it is vital to plan data collection on a regular basis.

- Use international definitions for consistency.

There are many examples of indicator sets globally, and a good place to start is the IEA Scoreboard, which was developed in 2009. However, these indicators were developed before the significant growth in non-hydro renewable sources used for electricity generation and the general expansion of energy end-use data. This emphasises that any major change in the national energy landscape may well require new KPIs to track progress correctly.

In designing a set of indicators, thought needs to be given to the choice of data for both the numerator (top) and the denominator (bottom), to ensure that the indicator provides a sensible and meaningful assessment. A few examples follow.

Historically, a leading indicator of energy system diversity has been the share of each fuel in total energy supply (TES). While still useful, this indicator is not the most reflective measure of the share of renewables, for example, because of the established international accounting rules for energy (as set out in the International Recommendations for Energy Statistics): electricity from hydro, wind and solar is counted at its output, whereas fossil fuels are counted at their input before conversion losses, which understates the contribution of renewables. For renewables, it is likely to be more useful to look at their share in electricity generation, and here actual generation will be far more informative than share of capacity, given that technologies have different capacity (or load) factors.
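To show why generation and capacity shares can diverge, the sketch below takes a hypothetical fleet with assumed capacity factors and computes the renewable share both ways. The `FLEET` figures and the `renewable_shares` function are illustrative assumptions, and generation is derived from capacity × capacity factor × hours only to keep the example self-contained; in practice the indicator should use metered generation data.

```python
HOURS_PER_YEAR = 8760

# Hypothetical fleet: technology -> (capacity in MW, capacity factor, renewable?)
FLEET = {
    "gas": (5000, 0.55, False),
    "hydro": (1200, 0.35, True),
    "solar": (1000, 0.20, True),
}


def renewable_shares(fleet):
    """Return (renewable share of capacity, renewable share of generation)."""
    cap_total = sum(c for c, _, _ in fleet.values())
    cap_ren = sum(c for c, _, ren in fleet.values() if ren)
    # Annual generation in GWh, estimated from assumed capacity factors.
    gen = {k: c * cf * HOURS_PER_YEAR / 1000 for k, (c, cf, _) in fleet.items()}
    gen_total = sum(gen.values())
    gen_ren = sum(gen[k] for k, (_, _, ren) in fleet.items() if ren)
    return cap_ren / cap_total, gen_ren / gen_total


cap_share, gen_share = renewable_shares(FLEET)
print(f"Renewable share of capacity:   {cap_share:.1%}")
print(f"Renewable share of generation: {gen_share:.1%}")
```

With these assumed figures renewables make up about 31% of capacity but only about 18% of generation, so a capacity-based KPI would overstate their contribution.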

Another question is whether to use TES or total final consumption (TFC) as a denominator. There are good arguments for either, but it is important to understand why these numbers might change and what impact that might have on an indicator that uses them. Consider, for example, a country that imports hydropower-generated electricity from a neighbouring country but relies on domestic fossil fuel generation as backup in years when hydro output is low and imports are therefore limited. All else being equal, TES will be higher in years when electricity has to be generated domestically, because the fuel input to power plants (including conversion losses) is counted in TES, whereas imported electricity is counted only at its energy content. TFC, however, will be unchanged, as final demand for energy does not depend on how the electricity is produced. Using TFC and focusing on energy demand in the country will therefore often provide more informative and stable indicators.
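The TES/TFC example can be made concrete with a small calculation. The sketch below assumes no grid losses, counts imported electricity at its energy content, counts domestic fossil generation at its fuel input (output divided by an assumed 40% plant efficiency), and treats all other fuels as supplied exactly at their final consumption; the `tes_and_tfc` function and all quantities (in TWh) are hypothetical.

```python
def tes_and_tfc(other_final, elec_final, imported_elec, plant_efficiency):
    """Return (TES, TFC). Assumes no grid losses and that other fuels are
    supplied exactly at their final consumption."""
    domestic_elec = elec_final - imported_elec
    fuel_input = domestic_elec / plant_efficiency    # conversion losses enter TES
    tes = other_final + imported_elec + fuel_input   # imports counted at energy content
    tfc = other_final + elec_final                   # final demand only
    return tes, tfc


wet_year = tes_and_tfc(other_final=80, elec_final=20, imported_elec=20, plant_efficiency=0.4)
dry_year = tes_and_tfc(other_final=80, elec_final=20, imported_elec=8, plant_efficiency=0.4)
print("wet year (TES, TFC):", wet_year)
print("dry year (TES, TFC):", dry_year)
```

TES rises from 100 to 118 TWh in the dry year while TFC stays at 100 TWh, so an indicator with TES as its denominator would move for reasons unrelated to demand.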

A final example is energy efficiency. In the absence of anything else, this is often measured via an economy-wide intensity index, e.g. energy consumption/GDP. However, this is at best a proxy, as the value of this indicator will change for a number of reasons, including changes in the structure of a country's economy. A country growing its service sector while its manufacturing sector declines is very likely to see its energy intensity fall, even if there has been no real improvement in energy efficiency, as the service sector on average uses less energy per unit of GDP produced.
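The structural effect described above can be reproduced with two sectors whose energy use per unit of value added is held fixed, so that there is, by construction, no efficiency improvement. The `aggregate_intensity` function, the sector intensities and the value-added figures are hypothetical.

```python
def aggregate_intensity(value_added, sector_intensity):
    """Economy-wide energy intensity (energy / GDP) built up from sectors."""
    energy = sum(value_added[s] * sector_intensity[s] for s in value_added)
    gdp = sum(value_added.values())
    return energy / gdp


# Energy per unit of value added, unchanged between the two years (hypothetical).
intensity = {"manufacturing": 5.0, "services": 1.0}

year_1 = {"manufacturing": 40.0, "services": 60.0}
year_2 = {"manufacturing": 30.0, "services": 80.0}  # services grow, manufacturing shrinks

i1 = aggregate_intensity(year_1, intensity)
i2 = aggregate_intensity(year_2, intensity)
print(f"Intensity falls by {1 - i2 / i1:.1%} with no efficiency gain")
```

Both sectors use exactly as much energy per unit of output in year 2 as in year 1, yet the economy-wide indicator falls by roughly a fifth, purely because of the shift in economic structure.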

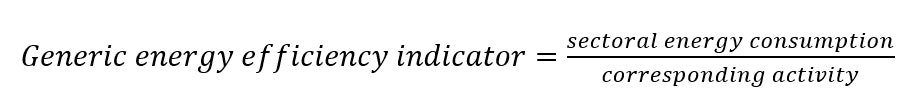

Therefore, for energy efficiency it is more representative to link sectoral energy consumption with the activity for which that energy is used, so a generic sectoral indicator (intensity) will look like:

Sectoral intensity = Sectoral energy consumption / Sectoral activity

Examples of activities for main sectors

| Sector | Activity |
|---|---|
| Overall | GDP; population |
| Residential | Population; floor area; number of appliances |
| Service (ideally by category) | Value added; number of employees; floor area |
| Transport | Passenger-kilometre |
| Industry (by subsector) | Value added |

More information on energy efficiency indicators can be found in the IEA’s energy efficiency indicator manual, which provides guidance on how to collect the data needed for indicators and includes a compilation of over 170 existing practices from across the world (IEA, 2014).

A timeline for Azerbaijan’s energy policy-making process

Timeline through 2030

Conclusion

This report has set out a path for a country to develop a long-term energy strategy that helps it understand and plan for its energy priorities. Should Azerbaijan choose to develop a long-term approach to energy, following this broad outline is likely to be advantageous. Many other countries have embarked on long-term planning, knowing that creating change requires some form of government action. That action needs to be understood by people and businesses so that they, in turn, can take the actions required.

Across the world, countries are developing longer-term strategies to accelerate clean energy transitions, to ensure energy security and, for some, to maximise the potential of their natural assets. Should Azerbaijan wish to do the same, ensuring it has comprehensive energy statistics covering the entirety of its energy supply and use will be a vital step. Those data also need to be made available to all, which may require stronger co‑operation across government ministries.

Expanding energy statistics, especially on the demand side, will allow Azerbaijan, like other countries, to embark on energy modelling to better understand how best to achieve its long-term aims.

Ultimately, the choice to follow this pathway, as outlined here, is for Azerbaijan to make. The country has natural assets, both fossil and renewable, that can be harnessed and used effectively to support broader goals of energy security, transition and prosperity. A long-term, strategic approach to doing so will prove beneficial.